The Death of the Oracle: A Shift to Modular Intelligence

1. The Death of the Oracle

For the last few years, we have lived under the spell of the "Oracle." We were told (and we believed) that the ultimate evolution of Artificial Intelligence was a single, monolithic "God Model". This undifferentiated entity was expected to possess infinite breadth and depth, a digital polymath capable of drafting legal briefs, architecting microservices, and writing poetry in a single breath.

But as we moved from simple chat interfaces to production-grade agentic systems, the dream began to crack.

> The Generalist Fallacy

The industry has hit a wall. We have realized that treating a Large Language Model (LLM) as an all-knowing oracle is not just inefficient but a fundamental architectural error known as the "generalist fallacy". In our quest for the one model to rule them all, we ignored an inconvenient truth: expertise is not just a pile of facts but a specialized lens through which a subject is observed.

When you ask a generalist model to handle a complex, multi-faceted workflow, you are not simply giving it a task; you are overwhelming its attention mechanism. You are asking it to be a legal scholar, a senior coder, and a creative director simultaneously. The result is a slow, steady degradation of performance. The "Oracle" is drifting.

The era of the monolithic generalist is ending. We are witnessing a seismic shift toward Modular Intelligence. We are moving away from the God Model and toward the "Team of Rivals"—a directed architecture where expertise is treated as a callable, modular resource.

2. The Villain: Cognitive Pollution

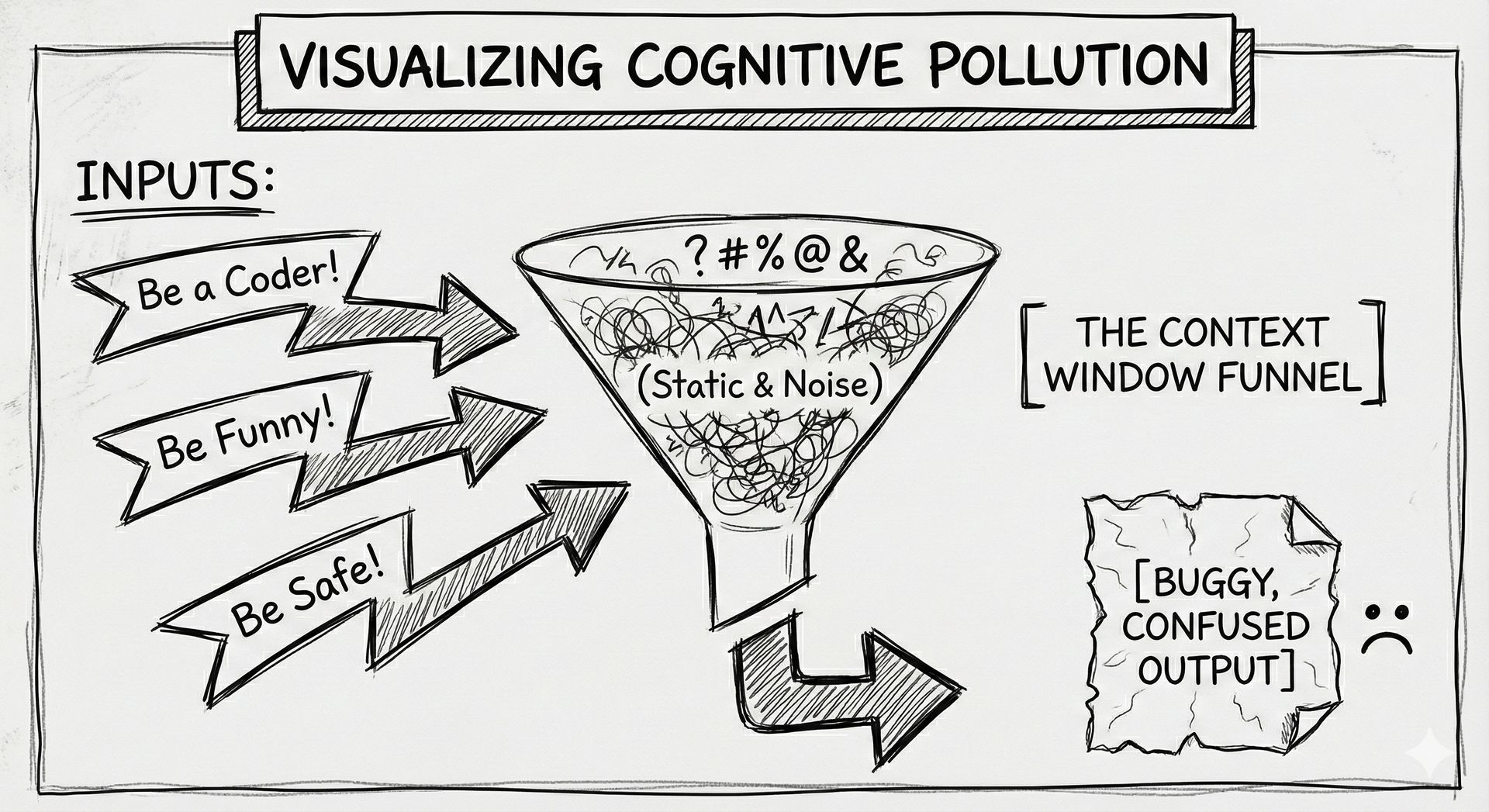

In the world of AI engineering, the most dangerous enemy is known as Cognitive Pollution.

Imagine a brilliant architect forced to work in a room where a hundred people are shouting different building codes, legal requirements, and interior design trends at them all at once (I’ve been to places like this, and trust me when I say it’s not great). Even the most capable mind would eventually suffer from attention degradation.

This is exactly what happens inside a context window when we use massive, bloated system prompts to force a model into being "everything".

The Hidden Cost: Prompt Drift

As the conversation grows and the requirements pile up, the model’s focus begins to blur. The specific heuristics required for high-fidelity code generation get polluted by the linguistic styles of a copywriter or the restrictive safety layers of a compliance bot.

The model starts to "lose its mind" halfway through the task because its attention is being pulled in too many directions. In computational terms, we are polluting the latent space activation. By failing to isolate expertise, we ensure that no single domain is handled with the precision it requires.

The Bloated Context (Don't do this)

Here is what Cognitive Pollution looks like in code. Notice how the system instructions conflict, pulling the model in three directions:

    # THE ANTI-PATTERN: The "God Model" Prompt
    system_prompt = """
    You are an expert Backend Engineer, but also a Legal Compliance Officer
    and a Marketing Copywriter.
    1. Write a Python microservice for handling payments.
    2. Ensure the code comments explain the legal ramifications of GDPR.
    3. Write the variable names in a 'fun, zesty' marketing tone to keep developers engaged.
    """
    # Result: The model produces buggy code, with dry comments, and confusing variable names.

This is the villain of our story: the inefficiency of the unmanaged context. It leads to suboptimal outputs, higher token costs, and systems that are impossible to debug. When your "God Model" fails, where do you start looking? How do you fix a mind that is trying to do everything and succeeding at nothing?

3. The Hero: The Modular Persona

Enter our protagonist: The Persona Pattern.

For too long, personas were seen as a simple gimmick, a way to make a chatbot sound like a pirate or a helpful assistant. But in high-fidelity agentic architecture, the persona is something much deeper. It is a fundamental architectural primitive.

Think of it as a Heist Crew. If you want to rob a digital vault, you don't hire one guy who's "pretty good" at everything. You hire the Driver, the Hacker, and the Muscle. Each has a specific role, a specific set of tools, and—most importantly—they stay out of each other's way.

The Persona Pattern allows us to decompose complex cognitive labor into discrete units. Instead of one overburdened agent, we build a Chain of Expertise.
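To make the decomposition concrete, here is a minimal sketch of the overloaded prompt from earlier split into three isolated contexts. The `Persona` class, the three role prompts, and `build_messages` are hypothetical names invented for illustration, and the message format simply mirrors the common system/user chat convention rather than any particular vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    """One specialist: a name plus the only system prompt it ever sees."""
    name: str
    system_prompt: str


# Hypothetical personas: one narrow job each, no shared instructions.
BACKEND = Persona(
    "backend_engineer",
    "You are a senior backend engineer. Write the payment microservice in Python.",
)
COMPLIANCE = Persona(
    "compliance_officer",
    "You are a legal compliance officer. Annotate the code with GDPR considerations.",
)
COPYWRITER = Persona(
    "copywriter",
    "You are a marketing copywriter. Write the developer-facing README intro.",
)


def build_messages(persona: Persona, task: str) -> list[dict[str, str]]:
    """Return a fresh, isolated context: one system prompt, one user task."""
    return [
        {"role": "system", "content": persona.system_prompt},
        {"role": "user", "content": task},
    ]


crew = [BACKEND, COMPLIANCE, COPYWRITER]
task = "Payments service for an EU web shop."
contexts = {p.name: build_messages(p, task) for p in crew}
```

Each entry in `contexts` can be sent to a model on its own, so the Hacker never has to read the Driver's instructions.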

The Semantic ZIP File: Understanding Prompt Economy

The true power of the persona lies in its function as a semantic compression algorithm. In the high-stakes economy of context windows, every token counts.

When you invoke a persona (telling the model, "Act as a Senior Python Solutions Architect"), you are not only setting the tone but also using a semantic key to unlock a massive repository of associated behaviors, heuristics, and standards stored in the model's latent space.

Without the persona abstraction, you would have to explicitly list every coding standard, every review methodology, and every architectural preference. You would burn thousands of tokens before the model even started the task. The persona allows you to "unzip" thousands of tokens' worth of implicit expertise with a single sentence. It is the ultimate tool for prompt economy.

Exercise: The Semantic ZIP

Try this in your own playground to see the difference in output fidelity:

Prompt A (Generic): "Write a function to connect to a database."

Prompt B (Persona-Driven): "Act as a Senior Database Reliability Engineer focused on high-concurrency environments. Write a Python function to connect to a PostgreSQL database using a connection pool."

Notice how Prompt B tends to "unzip" error handling, connection limits, and retry logic without you explicitly asking for them.
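If you would rather script the exercise than eyeball it, a rough harness might look like the sketch below. Everything here beyond the two prompts is an assumption: `call_llm` is a placeholder for whatever client you use, the keyword checklist is a deliberately crude proxy for "fidelity", and `fake_llm` is a canned stand-in so the script runs offline. Its replies are invented and say nothing about how a real model behaves.

```python
from typing import Callable

PROMPT_A = "Write a function to connect to a database."
PROMPT_B = (
    "Act as a Senior Database Reliability Engineer focused on "
    "high-concurrency environments. Write a Python function to connect "
    "to a PostgreSQL database using a connection pool."
)

# Terms we hope the persona prompt surfaces without being asked (assumed list).
CHECKLIST = ("try", "except", "pool", "retry", "timeout")


def score(output: str) -> dict[str, bool]:
    """Crude substring check: which checklist terms appear in the output?"""
    lowered = output.lower()
    return {term: term in lowered for term in CHECKLIST}


def run_exercise(call_llm: Callable[[str], str]) -> dict[str, dict[str, bool]]:
    """Send both prompts through the same model and score each reply."""
    return {"A": score(call_llm(PROMPT_A)), "B": score(call_llm(PROMPT_B))}


def fake_llm(prompt: str) -> str:
    """Offline stand-in with canned replies; replace with a real model call."""
    if "Reliability Engineer" in prompt:
        return "pool = make_pool(timeout=5)\ntry: ...\nexcept Exception: retry()"
    return "conn = connect()"


results = run_exercise(fake_llm)
```

Swap `fake_llm` for a real call and run it a few times; a single sample per prompt is not much evidence either way.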

The Lens of the Latent Space

Mathematically, a persona is a steering mechanism. It narrows the model's probability distribution to a specific cluster of linguistic and logical patterns associated with that identity.
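One hedged way to write that down, using notation that is not in the original article: let $x$ be the task, $r$ the persona text prepended to it, $y = (y_1, \dots, y_T)$ the generated tokens, and $p_\theta$ the model. The persona changes nothing about the model itself, only what it is conditioned on.

```latex
\[
  p_\theta(y \mid x)
  \;\longrightarrow\;
  p_\theta(y \mid r, x)
  \;=\; \prod_{t=1}^{T} p_\theta\!\left(y_t \mid r, x, y_{<t}\right)
\]
```

"Narrowing" is then the informal expectation that $p_\theta(\cdot \mid r, x)$ concentrates more of its probability mass on outputs typical of the role than $p_\theta(\cdot \mid x)$ does. For any particular persona that is an empirical claim to test, not a guarantee.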

When you activate the "Security Engineer" persona, the model doesn't just "know" about vulnerabilities; it adopts a paranoid, verification-heavy cognitive style. When you switch to the "UX Designer," it adopts an empathetic, user-centric framework. In this sense, we are using meta-cognition through role switching.

By treating expertise as a modular resource, we gain three critical advantages:

Reliability: Specialized agents are anchored by their roles, making them less prone to drift.

Explainability: In a "Chain of Agents," you can audit exactly where a failure occurred. If the code is buggy, you debug the "Coder" persona, not the entire system.

Emergent Capability: A persona can activate reasoning patterns that you, the developer, might not even know how to describe explicitly.
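The explainability point can be sketched as a chain that records every hand-off and attaches a cheap check to each persona's output. The `Step`, `TraceEntry`, `run_chain`, and `first_failure` names are hypothetical, and the lambdas are stubs standing in for real persona-specific model calls.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Step:
    """One persona in the chain plus a check on its output."""
    persona: str
    run: Callable[[str], str]     # stand-in for a persona-specific LLM call
    check: Callable[[str], bool]  # cheap validation of that persona's output


@dataclass
class TraceEntry:
    """What one persona received, what it produced, and whether it passed."""
    persona: str
    input_text: str
    output_text: str
    ok: bool


def run_chain(steps: list[Step], task: str) -> list[TraceEntry]:
    """Run each step on the previous output, recording every hand-off."""
    trace: list[TraceEntry] = []
    payload = task
    for step in steps:
        output = step.run(payload)
        trace.append(TraceEntry(step.persona, payload, output, step.check(output)))
        payload = output
    return trace


def first_failure(trace: list[TraceEntry]) -> Optional[str]:
    """Name the first persona whose output failed its check, if any."""
    return next((entry.persona for entry in trace if not entry.ok), None)


# Stub personas: the "coder" omits the return statement on purpose.
steps = [
    Step("planner", lambda t: f"PLAN: {t}", lambda out: out.startswith("PLAN:")),
    Step("coder", lambda plan: "def add(a, b): pass", lambda out: "return" in out),
    Step("reviewer", lambda code: f"REVIEWED: {code}", lambda out: out.startswith("REVIEWED:")),
]
trace = run_chain(steps, "add two numbers")
culprit = first_failure(trace)
```

Here `culprit` points at the coder, so that is the prompt you open first. Note that this sketch keeps running after a failed check; stopping early is an equally reasonable design.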

In this way, the modular persona evolves from a simple prompt into a building block for the first generation of professional agentic teams.

The Builder’s Toolkit: Where to Start

If you are ready to kill the Oracle and build your own Heist Crew, here are the resources you can use in production.

1. The Frameworks (Python)

For Beginners (Role-Based): CrewAI. This library was practically built for the "Heist Crew" metaphor. It allows you to define Agents, Tasks, and Processes (sequential or hierarchical) with very little boilerplate.

For Control (Graph-Based): LangGraph. If you need cycles (loops), persistence (memory), and complex state management, this is a widely used choice. It treats your agent system as a graph where nodes are actions and edges are the (optionally conditional) transitions between them.

The Unsung Hero: Pydantic. You cannot build reliable agents without structured data. Pydantic is the bedrock of valid data exchange between your personas.

2. The Must-Read

"Building Effective Agents" by Anthropic: This is arguably the highest-signal article on the topic. It moves beyond hype and defines clear patterns like "Orchestrator-Workers" and "Evaluator-Optimizer."

The "Instructor" Library: A Python library that forces LLMs to output structured data (JSON) rather than text, effectively preventing "yapping" and keeping your personas focused on the payload.

3. A Quick Start Heist

Don't over-engineer it. Start with two prompts in a simple Python script:

The Critic: "You are a senior code reviewer. List 3 critical flaws in this snippet."

The Fixer: "You are a senior engineer. Fix the code based only on the Critic's feedback."

Pass the output of the Critic into the Fixer. Congratulations, you just built your first modular system.
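As a starting point, the two-prompt heist could be wired up roughly like this. The two system prompts are the ones quoted above; `critic_then_fixer`, the `LLM` type alias, and `echo_llm` are hypothetical names, and `echo_llm` is only an offline stand-in that shows which persona saw which input. Replace it with a call to your own model client.

```python
from typing import Callable

CRITIC = "You are a senior code reviewer. List 3 critical flaws in this snippet."
FIXER = "You are a senior engineer. Fix the code based only on the Critic's feedback."

# (system_prompt, user_content) -> model reply; plug in your own client here.
LLM = Callable[[str, str], str]


def critic_then_fixer(snippet: str, call_llm: LLM) -> tuple[str, str]:
    """Run the Critic, then hand its feedback (and the code) to the Fixer."""
    feedback = call_llm(CRITIC, snippet)
    fixer_input = f"Code:\n{snippet}\n\nCritic's feedback:\n{feedback}"
    fixed = call_llm(FIXER, fixer_input)
    return feedback, fixed


def echo_llm(system_prompt: str, user_content: str) -> str:
    """Offline stand-in: reports the persona and the size of its input."""
    role = "critic" if system_prompt == CRITIC else "fixer"
    return f"[{role} saw {len(user_content)} chars]"


feedback, fixed = critic_then_fixer("def div(a, b): return a / b", echo_llm)
```

The Fixer receives the original snippet alongside the feedback, since it cannot repair code it has never seen. Each call gets its own system prompt, so neither persona inherits the other's instructions.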