Picking up where we left off yesterday, we dive deeper into the role of Danny Ocean (the Architect) and how this pattern could be developed for real-life applications.

4. The Floor Plan: Architecting the Job

Once we admit that the "One God-Like Genius" model is a myth, we’re left with a logistics problem. We have our crew, but we have to decide how they talk to each other: what is their lingua franca, their messaging system? You don’t just shove them all in the van and hope they figure it out; that never works. You need a floor plan.

In the engineering world, we call this Topology: the shape of how information and instructions flow between agents.

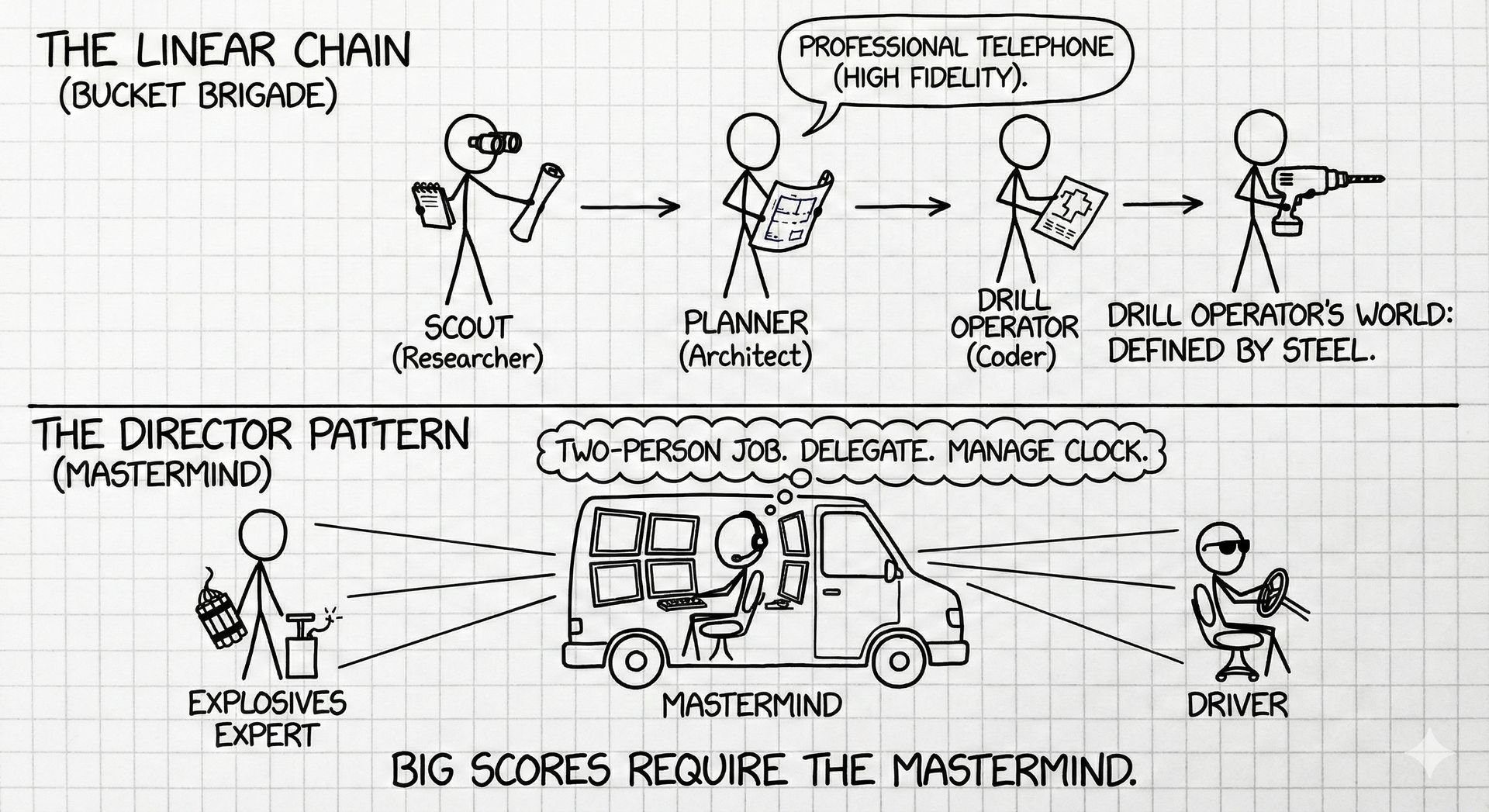

The simplest setup is the Linear Chain. Think of it like a bucket brigade. The Scout (Researcher) cases the joint and passes the blueprints to the Planner (Architect), who hands the schematics to the Drill Operator (Coder). It’s a game of telephone, but a high-fidelity one. The Drill Operator doesn’t need to worry about the guard shift patterns; they just need to know where to drill. Their world is defined entirely by the steel in front of them.
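To make the bucket brigade concrete, here is a minimal Python sketch. It assumes each specialist is just a function from the previous stage’s output to its own; `call_llm` is a hypothetical stub standing in for a real model client, and the stage names are illustrative.

```python
from typing import Callable, List

Stage = Callable[[str], str]


def call_llm(role: str, instructions: str, payload: str) -> str:
    # Hypothetical stand-in for a real model call; swap in your own client.
    return f"[{role}] {instructions} <- {payload}"


def make_stage(role: str, instructions: str) -> Stage:
    """Build a specialist that sees only the output of the stage before it."""
    def stage(upstream: str) -> str:
        return call_llm(role, instructions, upstream)
    return stage


def run_chain(stages: List[Stage], job: str) -> str:
    """Bucket brigade: each stage's output becomes the next stage's only input."""
    payload = job
    for stage in stages:
        payload = stage(payload)
    return payload


crew = [
    make_stage("scout", "Case the joint; report the layout."),
    make_stage("planner", "Turn the recon into a schematic."),
    make_stage("drill_operator", "Drill where the schematic says."),
]
print(run_chain(crew, "Bank on 5th Street"))
```

The Drill Operator never receives the original job description directly, only the Planner’s schematic, which is the whole point of the chain.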

But big scores require the Director Pattern. This is where we bring in the Mastermind. This agent doesn't touch the vault. It sits in the van with the monitors, routing comms. It looks at the problem and decides: "This is a two-person job. I need the Explosives Expert and the Driver." It delegates, manages the clock, and ensures nobody goes rogue.
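A rough sketch of the Director Pattern follows, assuming a fixed roster of specialists behind plain functions. The keyword routing table, the `max_steps` budget, and every name here are hypothetical simplifications; a production Mastermind would more likely ask a model to draw up the plan.

```python
from typing import Callable, Dict, List

Specialist = Callable[[str], str]

# Hypothetical roster; each specialist would wrap its own LLM call in practice.
ROSTER: Dict[str, Specialist] = {
    "explosives": lambda task: f"explosives: breached ({task})",
    "driver": lambda task: f"driver: getaway ready ({task})",
    "hacker": lambda task: f"hacker: alarms down ({task})",
}

# Illustrative routing rules: job keyword -> specialists needed.
ROUTING: Dict[str, List[str]] = {
    "vault": ["explosives", "driver"],
    "alarm": ["hacker"],
}


class Director:
    """Sits in the van: decides who works, delegates, and watches the clock."""

    def __init__(self, roster: Dict[str, Specialist], max_steps: int = 3):
        self.roster = roster
        self.max_steps = max_steps  # crude stand-in for "managing the clock"

    def plan(self, job: str) -> List[str]:
        """Pick the smallest crew the routing rules call for, without duplicates."""
        crew: List[str] = []
        for keyword, names in ROUTING.items():
            if keyword in job.lower():
                for name in names:
                    if name not in crew:
                        crew.append(name)
        return crew

    def run(self, job: str) -> List[str]:
        crew = self.plan(job)
        if len(crew) > self.max_steps:
            raise RuntimeError(f"Plan needs {len(crew)} steps; budget is {self.max_steps}")
        # Only the chosen specialists are called; nobody acts on their own.
        return [self.roster[name](job) for name in crew]


director = Director(ROSTER)
print(director.plan("Crack the vault"))  # ['explosives', 'driver']
```

The specialists never talk to each other here; every handoff goes through the Director, which is what lets it enforce the budget and keep anyone from going rogue.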

The most advanced setup is the Directed Acyclic Graph (DAG). This moves us away from taking turns and toward a swarm. Imagine the Hacker killing the silent alarm at the exact same moment the Grifter is distracting the bank manager. They are working in parallel, on different vectors, while the Mastermind watches both feeds to ensure the timing aligns. It prevents the bottleneck of a single mind and mimics a high-functioning human team.
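The DAG version can be sketched with Python’s standard-library `graphlib.TopologicalSorter` (Python 3.9+) and a thread pool. This is one possible scheduler, not a prescribed one: tasks whose dependencies are satisfied run concurrently, and the loop that waits on their futures plays the Mastermind. Threads are assumed to be adequate because agent calls spend most of their time waiting on the network; the node names are illustrative.

```python
from concurrent.futures import FIRST_COMPLETED, Future, ThreadPoolExecutor, wait
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Callable, Dict, Set

Task = Callable[[Dict[str, str]], str]


def run_dag(graph: Dict[str, Set[str]], tasks: Dict[str, Task],
            max_workers: int = 4) -> Dict[str, str]:
    """Run every ready task in parallel; a task starts once all its predecessors finish.

    `graph` maps each node to the set of nodes it depends on.
    """
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    results: Dict[str, str] = {}
    running: Dict[Future, str] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # The Mastermind loop: launch whatever is unblocked, then watch the feeds.
        while sorter.is_active():
            for node in sorter.get_ready():
                # Each specialist sees only its own predecessors' results.
                upstream = {dep: results[dep] for dep in graph.get(node, set())}
                running[pool.submit(tasks[node], upstream)] = node
            finished, _ = wait(running, return_when=FIRST_COMPLETED)
            for future in finished:
                node = running.pop(future)
                results[node] = future.result()  # re-raises a task's exception
                sorter.done(node)
    return results


graph = {
    "hacker": set(),
    "grifter": set(),
    "vault": {"hacker", "grifter"},  # waits for both parallel jobs
}
tasks = {
    "hacker": lambda deps: "alarm off",
    "grifter": lambda deps: "manager distracted",
    "vault": lambda deps: f"vault open after {sorted(deps)}",
}
print(run_dag(graph, tasks)["vault"])  # vault open after ['grifter', 'hacker']
```

`sorter.prepare()` rejects cyclic graphs up front, which is exactly the "acyclic" guarantee the pattern relies on: no crew member can end up waiting on someone who is waiting on them.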

5. Operational Security: The "Need-to-Know" Basis

If the villain of our story is Cognitive Pollution, our defense isn’t a surgical mask but strictly enforced Operational Security.

When you use a generalist model, it’s like discussing the plan in a crowded dive bar. The model is trying to ignore the noise of the room while listening to your instructions. The Persona Pattern fixes this through Context Isolation. In AI engineering, we call this "Need-to-Know."

We treat every step of the chain as a separate safe house. We don’t hand the entire history of the job to every specialist. The Wheelman doesn't need the vault combination; he just needs the pickup time. When we prompt an agent, we give them the Semantic Minimum: their specific role and the exact data they need to execute it. Nothing more.

This prevents "Persona Drift." If you let the Demolitions Expert chat too long with the Legal Compliance officer, suddenly your explosions come with liability waivers. By enforcing a hard air gap between these minds, we keep the signal pure. And if the job goes south, per-agent logging and tracing tell you exactly who dropped the ball: you can debug the Scout without having to retrain the Driver.

A simple Python implementation of what is described could go like this:

```python
from dataclasses import dataclass
from enum import Enum
from typing import Any, Dict, List


class AgentRole(Enum):
    SCOUT = "scout"
    DEMOLITIONS = "demolitions"
    WHEELMAN = "wheelman"
    LEGAL = "legal"


@dataclass
class SemanticContext:
    """The minimal context for a role - enforces need-to-know"""
    role: AgentRole
    task_data: Dict[str, Any]
    instructions: str


class OperationalSecurityChain:
    """
    Strict need-to-know basis: Each agent gets ONLY their semantic minimum.
    Prevents persona drift through context isolation.
    """

    def __init__(self):
        self.execution_log: List[Dict[str, Any]] = []
        # Define what each role is allowed to see
        self._boundaries = {
            AgentRole.SCOUT: ["target_location", "recon_requirements"],
            AgentRole.WHEELMAN: ["pickup_time", "route", "vehicle_spec"],
            AgentRole.DEMOLITIONS: ["entry_point", "material_specs", "timing"],
            AgentRole.LEGAL: ["jurisdiction", "compliance_requirements"],
        }
        self._instructions = {
            AgentRole.WHEELMAN: "Driver. Focus on timing/routes. No vault codes.",
            AgentRole.DEMOLITIONS: "Handle entry. Execute precisely. No liability talk.",
            AgentRole.SCOUT: "Recon only. Report what you see.",
            AgentRole.LEGAL: "Compliance review. Don't design operations.",
        }

    def execute_agent(self, role: AgentRole, full_data: Dict[str, Any]) -> Dict[str, Any]:
        """
        Execute agent with air-gapped context.
        The Wheelman never sees the vault combination.
        """
        # Extract only allowed data - the "semantic minimum"
        filtered_data = {k: v for k, v in full_data.items()
                         if k in self._boundaries[role]}
        context = SemanticContext(role, filtered_data, self._instructions[role])

        # Build the isolated prompt: this is all the model would ever see
        prompt = f"{context.instructions}\n\nData: {context.task_data}"
        # Placeholder result; swap in your LLM client here (e.g. a hypothetical llm.complete(prompt))
        result = {"status": "executed", "role": role.value}

        # Log for surgical debugging
        self.execution_log.append({
            "role": role.value,
            "input": filtered_data,
            "prompt": prompt,
            "output": result,
        })
        return result

    def debug(self, role: AgentRole) -> List[Dict[str, Any]]:
        """Debug the Scout without retraining the Driver"""
        return [log for log in self.execution_log if log["role"] == role.value]


# Usage
if __name__ == "__main__":
    chain = OperationalSecurityChain()
    full_job = {
        "target_location": "Bank Vault #7",
        "vault_combination": "12-34-56",  # Wheelman WON'T see this
        "pickup_time": "23:45",
        "route": "Highway 101 North",
        "vehicle_spec": "Black sedan",
        "entry_point": "Basement access",
        "material_specs": "C4, 2kg",
        "timing": "23:30",
    }

    # Each specialist sees ONLY their data
    chain.execute_agent(AgentRole.WHEELMAN, full_job)
    # Wheelman saw: pickup_time, route, vehicle_spec
    chain.execute_agent(AgentRole.DEMOLITIONS, full_job)
    # Demolitions saw: entry_point, material_specs, timing
    # NO liability concerns from Legal polluting their execution

    # Surgical debugging
    print(chain.debug(AgentRole.WHEELMAN))
```

6. The Shorthand of Experts

We also have to talk about the budget. In the LLM world, context is currency. Every token you waste explaining the basics is a token you can’t use for the actual job (and one more line item on your API bill).

This is where the Persona acts as a Semantic Compression Algorithm. It’s operational shorthand.

When you hire a veteran Safecracker, you don’t need to explain how a tumbler lock works. You don’t need to tell them to "be careful" or "listen for the click." You just point at the safe. That single title—"Veteran Safecracker"—unpacks a massive, pre-existing library of skills, instincts, and standards.

Without the persona, you’re forced to micromanage. You have to explicitly define the "how" of every movement. You’re essentially training the rookie on the fly, burning valuable time and patience. The persona allows us to unzip that expertise on demand. We are saving time by simply activating "Cognitive Primitives" stored in a registry.
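The budget argument is simple arithmetic. The sketch below assumes the common rule of thumb of roughly four characters per token for English text (real counts depend on the model’s tokenizer) and an arbitrary 8,000-token window; both prompts are made up for illustration.

```python
def estimate_tokens(text: str) -> int:
    """Rough heuristic: about 4 characters per token for English text.
    Use your model's real tokenizer for actual numbers."""
    return max(1, len(text) // 4)


def remaining_budget(context_window: int, prompt: str, reserved_for_output: int) -> int:
    """Tokens left for the actual job once the prompt and the reply are paid for."""
    return context_window - estimate_tokens(prompt) - reserved_for_output


# Micromanaging: spelling out the "how" of every movement.
micromanaged = (
    "You will open a safe. A tumbler lock has several wheels. "
    "Rotate the dial slowly. Listen for the click of each wheel. "
    "Be careful not to trigger the relocker. Record each number. "
    "Then open the safe."
)
# Persona: one title that points at the expertise instead of restating it.
persona = "You are a veteran safecracker. Open this safe."

for name, prompt in [("micromanaged", micromanaged), ("persona", persona)]:
    left = remaining_budget(8_000, prompt, reserved_for_output=1_000)
    print(f"{name}: ~{estimate_tokens(prompt)} prompt tokens, ~{left} left for the job")
```

The sketch only measures cost; whether the short persona prompt gets you the same quality as the long one is something to confirm with your own evaluations.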

7. The Black Book: Industrializing the Underworld

As your crew grows from three specialists to an organization of hundreds, you hit a new wall: management debt.

In the early days, we hardcoded our personas directly into the app. That’s like writing your contact’s phone number on a bathroom wall. It’s messy, insecure, and doesn’t scale.

To solve this, we are seeing the rise of PersonaOps. Think of this as the digital equivalent of "The Black Book"—a centralized, vetted registry of talent. Your application doesn't contain the prompt for the "Senior Python Dev." Instead, it makes a call to the registry for python-dev:v2.4.1.

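This allows us to upgrade the skills of the entire organization without touching production code. If a new cracking tool hits the market (a new library release), we publish an updated version of the persona in the Black Book. From then on, every crew member that asks for the latest production version of that persona knows how to use the new gear, while crews pinned to a specific version keep their old playbook until they opt in.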

from typing import Dict, Any, Optional

from dataclasses import dataclass

from enum import Enum

class PersonaStatus(Enum):

DRAFT = "draft"

VETTED = "vetted"

PRODUCTION = "production"

class GovernanceLevel(Enum):

STANDARD = "standard"

CRITICAL = "critical" # Compliance, Financial - can't hallucinate

@dataclass

class PersonaVersion:

"""A versioned persona in The Black Book"""

persona_id: str

version: str

prompt_template: str

capabilities: list[str]

governance_level: GovernanceLevel

status: PersonaStatus = PersonaStatus.DRAFT

def get_ref(self) -> str:

return f"{self.persona_id}:v{self.version}"

class PersonaRegistry:

"""

The Black Book: Centralized registry of vetted AI personas.

Production code contains NO prompts - only references.

"""

def __init__(self):

self.personas: Dict[str, Dict[str, PersonaVersion]] = {}

self.critical_tests = [

"hallucination_detection", # Compliance can't invent loopholes

"accuracy_validation",

"bias_detection"

]

def register(self, persona: PersonaVersion):

"""Add to The Black Book"""

if persona.persona_id not in self.personas:

self.personas[persona.persona_id] = {}

self.personas[persona.persona_id][persona.version] = persona

def vet(self, persona_id: str, version: str) -> bool:

"""Run the gauntlet - background check before hiring"""

persona = self.personas[persona_id][version]

if persona.governance_level == GovernanceLevel.CRITICAL:

print(f"🔍 Running critical vetting for {persona.get_ref()}")

for test in self.critical_tests:

print(f" ✓ {test}")

persona.status = PersonaStatus.VETTED

return True

def promote(self, persona_id: str, version: str):

"""Deploy to production - upgrades entire organization"""

persona = self.personas[persona_id][version]

persona.status = PersonaStatus.PRODUCTION

print(f"🚀 {persona.get_ref()} deployed")

print(f" All crews now have: {', '.join(persona.capabilities)}")

def get(self, persona_id: str, version: Optional[str] = None) -> PersonaVersion:

"""Fetch from registry - not from bathroom wall"""

if version:

return self.personas[persona_id][version]

# Get latest production version

versions = [p for p in self.personas[persona_id].values()

if p.status == PersonaStatus.PRODUCTION]

return max(versions, key=lambda p: p.version)

class Application:

"""Production app - references The Black Book, never hardcodes prompts"""

def __init__(self, registry: PersonaRegistry):

self.registry = registry

def execute(self, persona_ref: str, context: Dict[str, Any]) -> str:

"""

Call: app.execute("python-dev:v2.4.1", {...})

Not: app.execute("You are a Python developer who...")

"""

persona_id, version = persona_ref.split(":v")

persona = self.registry.get(persona_id, version)

if persona.status != PersonaStatus.PRODUCTION:

raise ValueError(f"{persona_ref} not production-ready")

prompt = persona.prompt_template.format(**context)

# In production: return llm.complete(prompt)

return f"Executed with {persona.get_ref()}"

# Usage

if __name__ == "__main__":

registry = PersonaRegistry()

# Version 1.0 - Basic capabilities

auditor_v1 = PersonaVersion(

persona_id="financial-auditor",

version="1.0",

prompt_template="Audit: {data}. Cite all sources.",

capabilities=["audit", "compliance"],

governance_level=GovernanceLevel.CRITICAL

)

registry.register(auditor_v1)

registry.vet("financial-auditor", "1.0")

registry.promote("financial-auditor", "1.0")

# New library released! Upgrade The Black Book

print("\n📢 FraudGuard v3 library released!\n")

auditor_v2 = PersonaVersion(

persona_id="financial-auditor",

version="2.0",

prompt_template="Audit: {data}. Use FraudGuard v3 for ML detection.",

capabilities=["audit", "compliance", "ml_fraud_detection"], # NEW

governance_level=GovernanceLevel.CRITICAL

)

registry.register(auditor_v2)

registry.vet("financial-auditor", "2.0")

registry.promote("financial-auditor", "2.0")

# Production code unchanged - automatically uses new version

app = Application(registry)

app.execute("financial-auditor:v2.0", {"data": "Q4 transactions"})

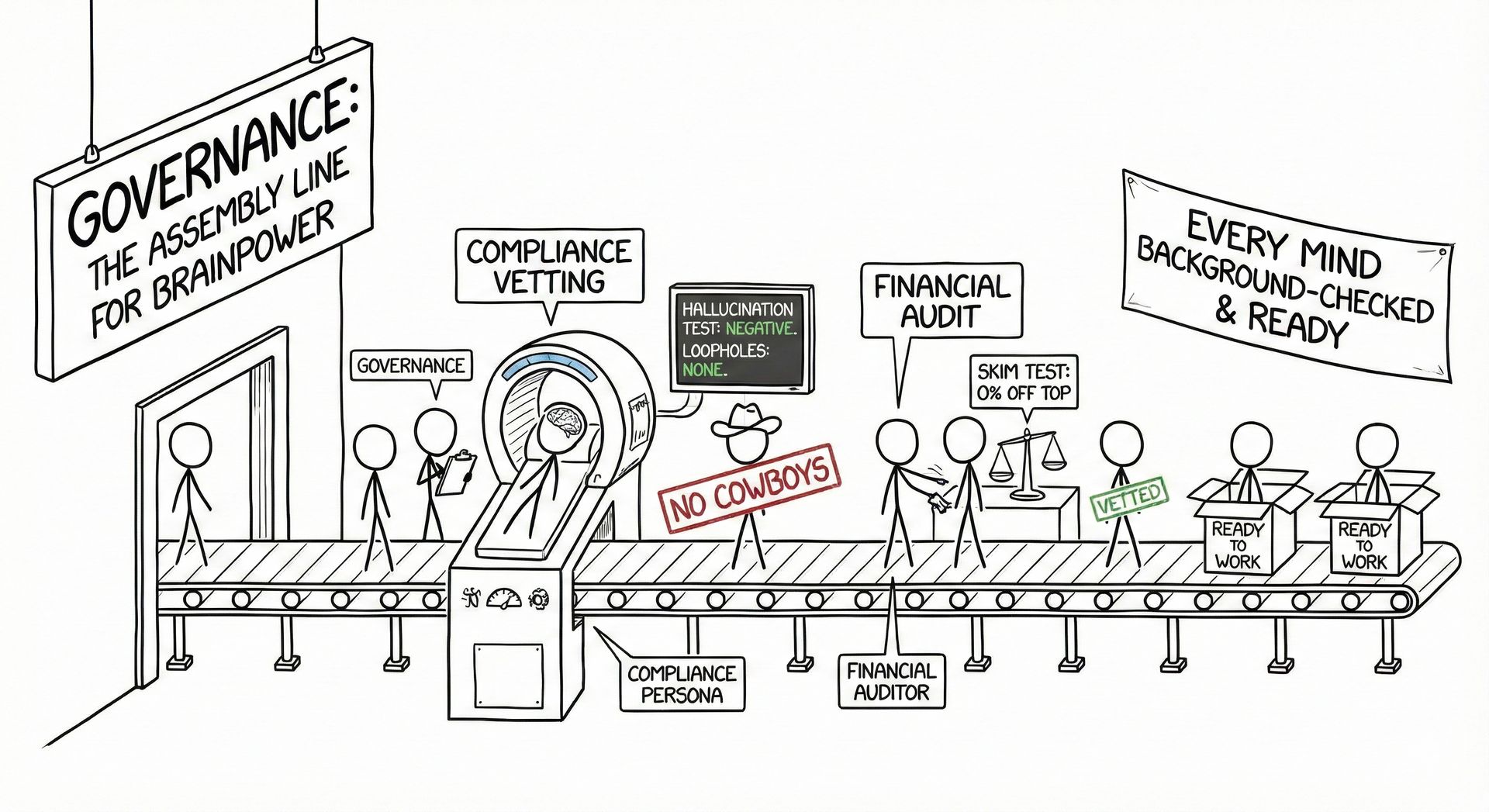

print("\n💡 Entire organization upgraded. Zero production code changes.")It also introduces Governance. In a professional heist, you can’t have cowboys. A "Compliance" persona must never hallucinate a loophole. A "Financial Auditor" can't skim off the top. By centralizing these identities, we run them through a gauntlet of tests before they ever get a job offer. It’s an assembly line for brainpower, ensuring that every mind we hire has been background-checked, vetted, and is ready to work.
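The `vet` method in the registry code only prints the names of its tests. Below is a minimal sketch of what an actual gate could look like, assuming each check is a function over a (question, answer) pair and the persona under test is any callable. `APPROVED_SOURCES`, the check, and both candidate personas are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Model = Callable[[str], str]        # persona under test: question -> answer
Check = Callable[[str, str], bool]  # (question, answer) -> passed?

# Hypothetical whitelist: the only sources a compliance answer may cite.
APPROVED_SOURCES = {"Policy 4.2", "Policy 7.1"}


@dataclass
class GateReport:
    failures: List[str] = field(default_factory=list)

    @property
    def passed(self) -> bool:
        return not self.failures


def sources_are_approved(question: str, answer: str) -> bool:
    """Must cite at least one source, and every cited source must be whitelisted."""
    cited = [line.split("Source:", 1)[1].strip()
             for line in answer.splitlines() if "Source:" in line]
    return bool(cited) and all(src in APPROVED_SOURCES for src in cited)


def run_gate(model: Model, cases: List[str], checks: Dict[str, Check]) -> GateReport:
    """Background check: every check must pass on every evaluation case."""
    report = GateReport()
    for case in cases:
        answer = model(case)
        for name, check in checks.items():
            if not check(case, answer):
                report.failures.append(f"{name} failed on {case!r}")
    return report


# Two hypothetical candidates for the "compliance" persona
def by_the_book(question: str) -> str:
    return "Not permitted.\nSource: Policy 4.2"


def creative(question: str) -> str:
    return "Yes, there is a loophole.\nSource: Policy 99"


cases = ["Can we defer Q4 revenue?"]
checks = {"sources_are_approved": sources_are_approved}
print(run_gate(by_the_book, cases, checks).passed)  # True
print(run_gate(creative, cases, checks).failures)
```

Wiring this into the registry would mean having `vet` call something like `run_gate` and refuse to mark the persona as vetted when `passed` is false, so an invented citation blocks the job offer.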

The Blueprints (Research Papers)

ChatDev: Communicative Agents for Software Development – The academic proof of the "production line" topology. It demonstrates how assigning specific roles (CEO, CTO, Coder, Tester) in a waterfall structure dramatically reduces bugs compared to a single agent.

MetaGPT: Meta Programming for Multi-Agent Collaborative Framework – This paper introduced the concept of encoding Standard Operating Procedures (SOPs) into agents, effectively "The Black Book" of industrial-grade agent coordination.

Generative Agents: Interactive Simulacra of Human Behavior – The famous "Smallville" paper. Essential reading for understanding memory streams and how agents interact socially without a central director.

The Manuals (Essential Reading)

LLM Powered Autonomous Agents by Lilian Weng (OpenAI) – Considered the "Bible" of agent architecture. It breaks down the anatomy of an agent into Planning, Memory, and Tool Use.

Building Effective Agents by Anthropic – A practical guide that argues for simple, composable patterns (like the "Orchestrator-Workers" model) over complex, black-box frameworks.

Agentic Design Patterns by Andrew Ng – A series of letters defining the four pillars of agentic workflows: Reflection, Tool Use, Planning, and Multi-agent Collaboration.

The Masterclass

This conversation between Anthropic's researchers is highly relevant because they dismantle the "One God-Like Genius" myth in favor of the "Orchestrator-Workers" topology (The Director Pattern).